Tech's Impact on Kids (The Digital Experiment) and The Environment

If we look at the billions of dollars spent on data centers, AI, and smartphones, the biggest "customer" isn't an adult in an office—it’s a kid in their bedroom. For a middle schooler in 2026, these resources change how their brain grows and how they see the world.

For context, we first need to understand the bigger picture.

As of early 2026, social media has reached a level of global saturation that makes it one of the most resource-intensive human activities on the planet. To estimate the impact, we have to look at the chain from user habits to the "physical" internet—the data centers.

1. The Global User Base (2026)

As of 2026, the number of active social media users is estimated at 5.66 billion. This represents approximately 69% of the global population and over 93% of all internet users.

- Average Usage: The typical user spends about 2 hours and 23 minutes daily across 7–8 different platforms.

- The Big Players: Facebook remains the largest (3.22B monthly users), followed by WhatsApp and Instagram (3B each), YouTube (2.85B), and TikTok (1.7B).

2. Data Uploaded and Stored Daily

The volume of data created daily is staggering, driven largely by the shift from text to high-resolution video (4K) and short-form content (Reels, TikToks, Shorts).

- Daily Data Generation: Estimates suggest that roughly 402 million terabytes (0.4 zettabytes) of data are created, captured, or consumed daily across the entire internet. Social media accounts for a significant portion of this traffic.

- Social Content Snapshots:

- Facebook: ~350 million pieces of content shared daily.

- Snapchat: ~4.7 billion "Snaps" shared daily.

- Video Dominance: Video now accounts for 82% of all global data traffic. A single hour of 4K YouTube streaming can consume up to 18GB of data.

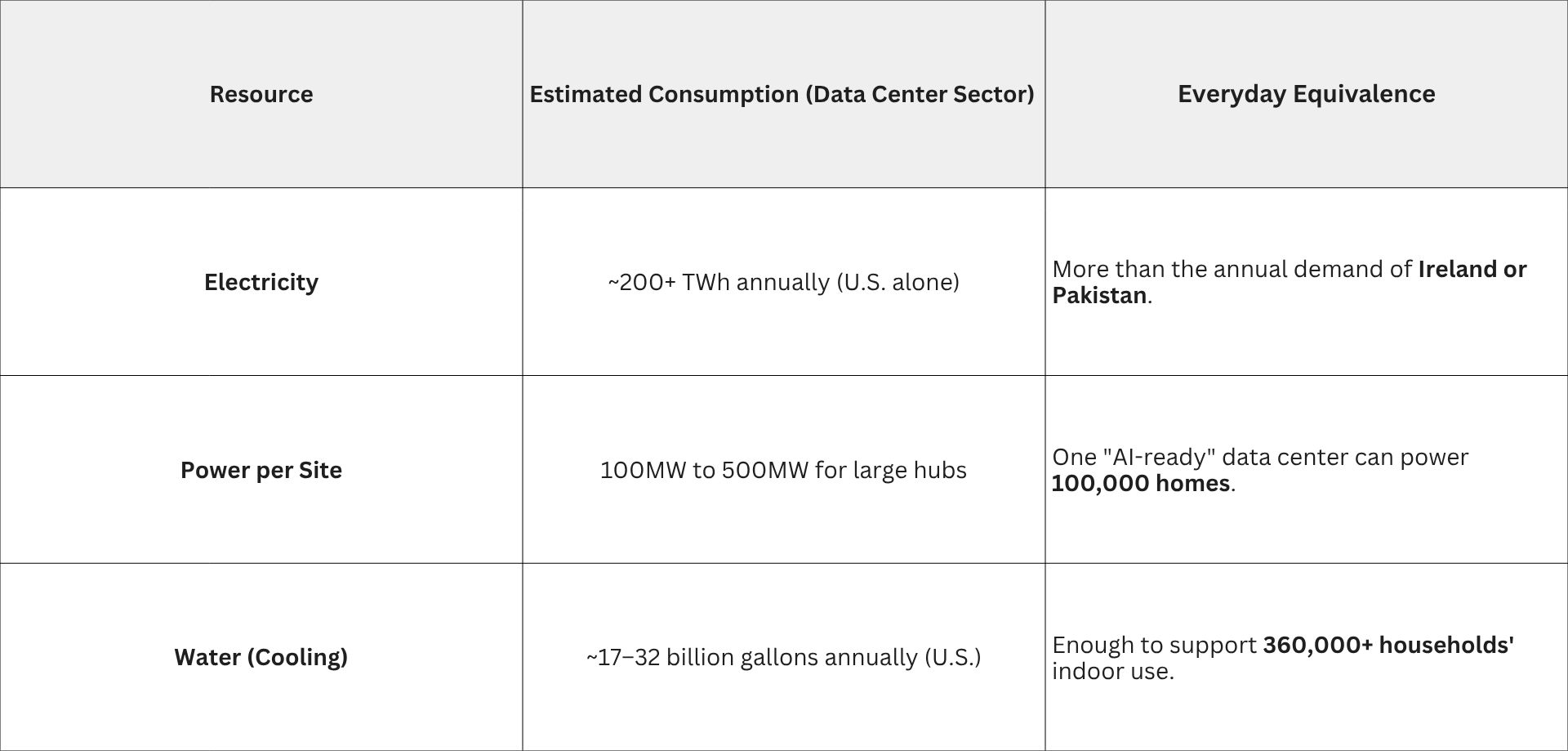

3. Resource Equivalence (Electricity & Water)

To store and process this data, "hyperscale" data centers operate 24/7. Their resource consumption is often compared to entire cities or nations.

The "Soda Straw" Effect: Large data centers can consume up to 5 million gallons of water a day for evaporative cooling—roughly what a city of 50,000 people uses.

4. Environmental Effects (The Carbon Footprint)

Every minute you spend scrolling has a measurable carbon cost. While a single "like" is negligible, the aggregate of 5.66 billion users is profound.

- Carbon per Minute:

- TikTok: ~2.63g CO₂e per minute.

- Reddit: ~2.48g CO₂e per minute.

- Pinterest/Instagram: ~1.1g to 1.3g CO₂e per minute.

- Annual Individual Impact: Using social media for the global average (145 mins/day) generates approximately 60kg of CO₂e per year per person.

- Total Global Impact: 5.66 billion users × 60kg CO₂e ≈ 340 million metric tons of CO₂ annually.

- This is roughly equivalent to the annual carbon emissions of the entire United Kingdom or 80 million gasoline-powered cars on the road for a year.
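The per-minute, per-person, and global figures above form a simple chain of unit conversions. The Python sketch below reproduces that chain using only the numbers quoted in this section; the variable and function names are illustrative, and treating each per-minute rate as constant over a whole year is an assumption.

```python
# Carbon-per-minute figures quoted above, in grams of CO2e per minute.
GRAMS_PER_MINUTE = {
    "TikTok": 2.63,
    "Reddit": 2.48,
    "Pinterest/Instagram (upper)": 1.3,
}

MINUTES_PER_DAY = 145   # global average daily use, from the text
DAYS_PER_YEAR = 365
USERS = 5.66e9          # active social media users, from the text


def annual_kg_per_user(grams_per_minute: float,
                       minutes_per_day: float = MINUTES_PER_DAY) -> float:
    """Yearly footprint of one user, in kilograms of CO2e."""
    return grams_per_minute * minutes_per_day * DAYS_PER_YEAR / 1000


def global_megatonnes(kg_per_user: float, users: float = USERS) -> float:
    """Scale a per-user footprint to all users, in million metric tons."""
    return kg_per_user * users / 1e9  # 1 million metric tons = 1e9 kg


# Average intensity implied by the text's 60 kg per person per year.
implied_g_per_min = 60 * 1000 / (MINUTES_PER_DAY * DAYS_PER_YEAR)

for app, rate in GRAMS_PER_MINUTE.items():
    print(f"{app}: {annual_kg_per_user(rate):.0f} kg CO2e per user per year")
print(f"Implied average: {implied_g_per_min:.2f} g CO2e per minute")
print(f"Global total at 60 kg/user: {global_megatonnes(60):.0f} Mt CO2e")
```

The 60 kg figure works out to about 1.13 g per minute, which is in the Pinterest/Instagram range; at TikTok's rate the same daily time would come to roughly 139 kg per year, so the global estimate is sensitive to which platforms dominate a user's time.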

Summary

While we perceive social media as "weightless" and digital, it is supported by a massive physical infrastructure of steel, concrete, and energy. In 2026, the transition to AI-integrated feeds and high-definition video has nearly doubled the energy requirements of these platforms compared to five years ago, making digital sustainability a primary concern for global climate goals.

The integration of AI into social media has fundamentally changed the "physics" of data consumption. While early social media was about retrieval (fetching an existing photo), AI-driven social media is about generation and compute-heavy curation.

As of 2026, the shift from static algorithms to Generative AI (GenAI) and AI-curated video feeds (like TikTok’s recommendation engine or Meta’s "Discovery" AI) has multiplied the resource cost per user.

1. The Energy "Markup" of AI

Every time an AI recommends a video or generates a custom filter, it performs inference. This requires much more power than a simple database search.

- Standard Search vs. AI Query: A standard search uses roughly 0.3 Wh. A single multimodal AI query (like generating an AI image or a complex video recommendation) can use 2.9 Wh to 10 Wh, roughly 10 to 33 times more energy.

- The Scale: Across 5.66 billion users, if every user interacts with an AI feature just 10 times a day, the incremental energy demand is roughly 58 GWh per day—enough to power the city of San Francisco for several days.
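The 58 GWh figure depends on how much extra energy each AI interaction is assumed to add, which the text does not state. This sketch, using only the figures above, backs out that implied per-interaction increment and compares it with the low end of the quoted 2.9 to 10 Wh range. The 10-interactions-per-day scenario is the text's hypothetical; the names are illustrative.

```python
USERS = 5.66e9             # active social media users, from the text
INTERACTIONS_PER_DAY = 10  # hypothetical scenario from the text
SEARCH_WH = 0.3            # energy of a standard search, Wh
AI_QUERY_WH = (2.9, 10.0)  # quoted range for a multimodal AI query, Wh


def daily_gwh(extra_wh_per_interaction: float) -> float:
    """Incremental daily demand, in GWh, for the 10-per-day scenario."""
    total_wh = USERS * INTERACTIONS_PER_DAY * extra_wh_per_interaction
    return total_wh / 1e9  # 1 GWh = 1e9 Wh


# How many times more energy an AI query uses than a search (about 9.7x to 33x).
multiples = [wh / SEARCH_WH for wh in AI_QUERY_WH]

# Extra energy per interaction implied by the text's 58 GWh/day.
implied_extra_wh = 58e9 / (USERS * INTERACTIONS_PER_DAY)

# Increment if every interaction sat at the low end of the quoted range.
low_end_gwh = daily_gwh(AI_QUERY_WH[0] - SEARCH_WH)

print([round(m, 1) for m in multiples])
print(round(implied_extra_wh, 2), round(low_end_gwh))
```

Read this way, 58 GWh per day corresponds to roughly 1 Wh of extra energy per interaction; using even the low end of the quoted AI range (2.6 Wh above a search) would give about 147 GWh per day.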

2. Resource Equivalence: The "AI Thirst"

AI models don't just "run"; they generate immense heat, requiring specialized cooling infrastructure.

- Water Usage: Training a large model such as GPT-3 was estimated to consume about 700,000 liters of fresh water for cooling. However, inference (daily use) is where the volume sits.

- The "Five-Drop" Rule: In 2026, a single AI-driven text interaction consumes about 0.26 mL of water (about 5 drops). While that sounds small:

- 1,000 AI-enhanced scrolls = 260 mL (a small glass of water).

- Multiply this by billions of users, and AI data centers are now projected to require up to 1.45 billion gallons of water daily for cooling, roughly the daily supply of New York City.
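The "Five-Drop" arithmetic scales linearly with the number of interactions. This sketch multiplies out the 0.26 mL per-interaction figure and converts it to US gallons; the daily scenario reuses the hypothetical described earlier in this section (5.66 billion users, 10 AI interactions each per day), and the names are illustrative.

```python
ML_PER_INTERACTION = 0.26       # water per AI text interaction, mL (from the text)
ML_PER_US_GALLON = 3785.411784  # exact definition of the US liquid gallon


def water_ml(interactions: float) -> float:
    """Cooling water for a number of AI interactions, in millilitres."""
    return interactions * ML_PER_INTERACTION


def ml_to_us_gallons(ml: float) -> float:
    """Convert millilitres to US liquid gallons."""
    return ml / ML_PER_US_GALLON


per_thousand_ml = water_ml(1_000)  # the "small glass" example

# Hypothetical: 5.66 billion users x 10 text interactions per day.
daily_gallons = ml_to_us_gallons(water_ml(5.66e9 * 10))

print(f"{per_thousand_ml:.0f} mL per 1,000 interactions")
print(f"{daily_gallons / 1e6:.1f} million US gallons per day")
```

At the per-prompt rate alone, that scenario comes to only about 3.9 million gallons a day, so the 1.45-billion-gallon projection must be counting much more than simple text prompts.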

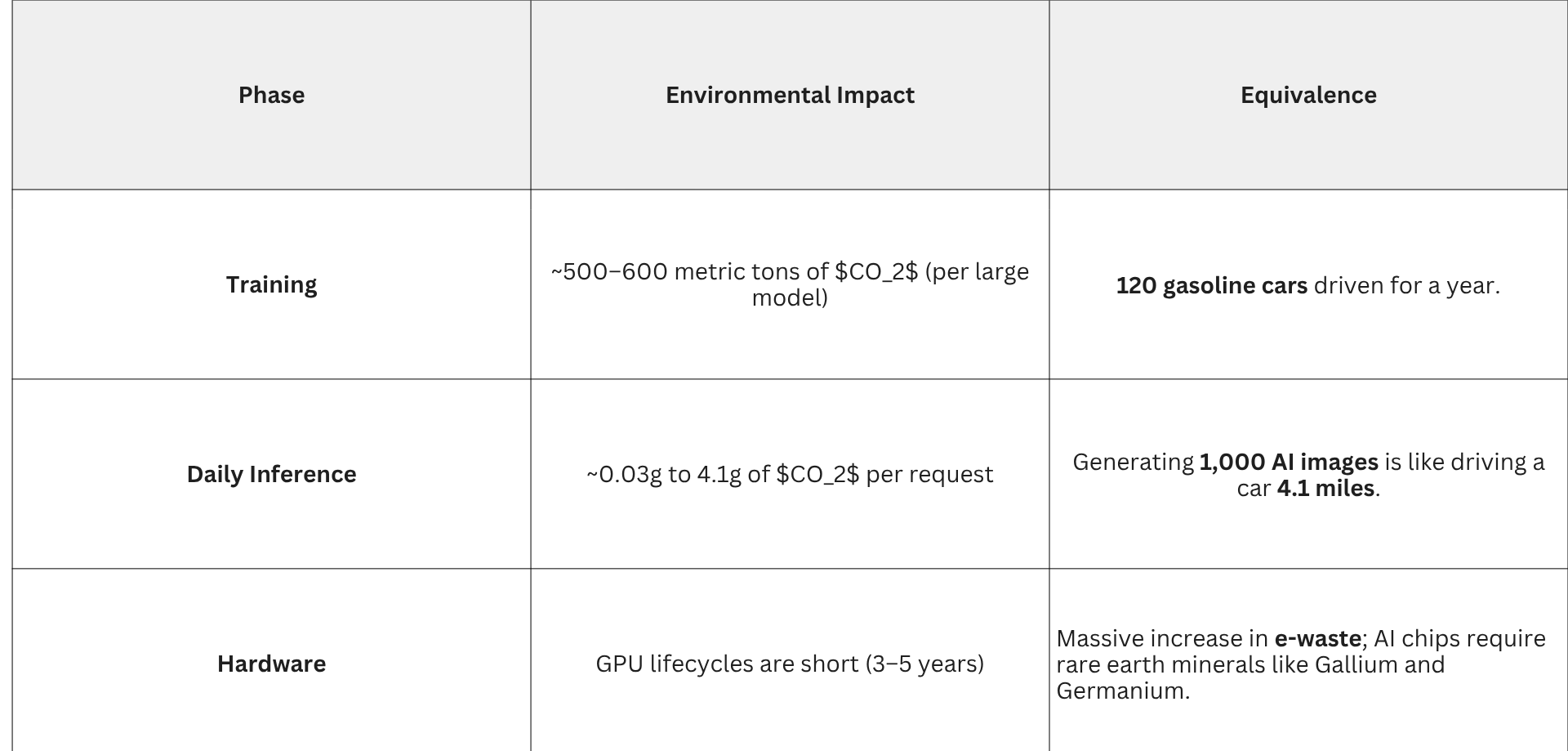

3. Environmental Effects: The Carbon Multiplier

The environmental cost of AI is split into two phases: Training (the birth of the AI) and Inference (its daily life on your phone).

4. The 2026 Paradox: Efficiency vs. Demand

There is a tug-of-war happening in the tech world:

- Efficiency Gains: Providers like Google and Meta have reduced the carbon footprint per AI prompt by over 30% since 2024 through better hardware.

- Jevons Paradox: As AI becomes more efficient and "cheaper" to use, social media platforms use more of it. In 2026, we see "AI-everything"—from AI-generated background music in Reels to real-time AI translation in live streams.

- Bottom Line: By 2026, AI is no longer a "feature" of social media; it is the engine. This engine has turned data centers into the 5th largest energy consumers in the world (if they were a country), positioned between Japan and Russia in terms of total demand.

The "digital weight" of a single user has increased from a few grams of CO₂ per day in the text era to a significant daily footprint that rivals small physical appliances.

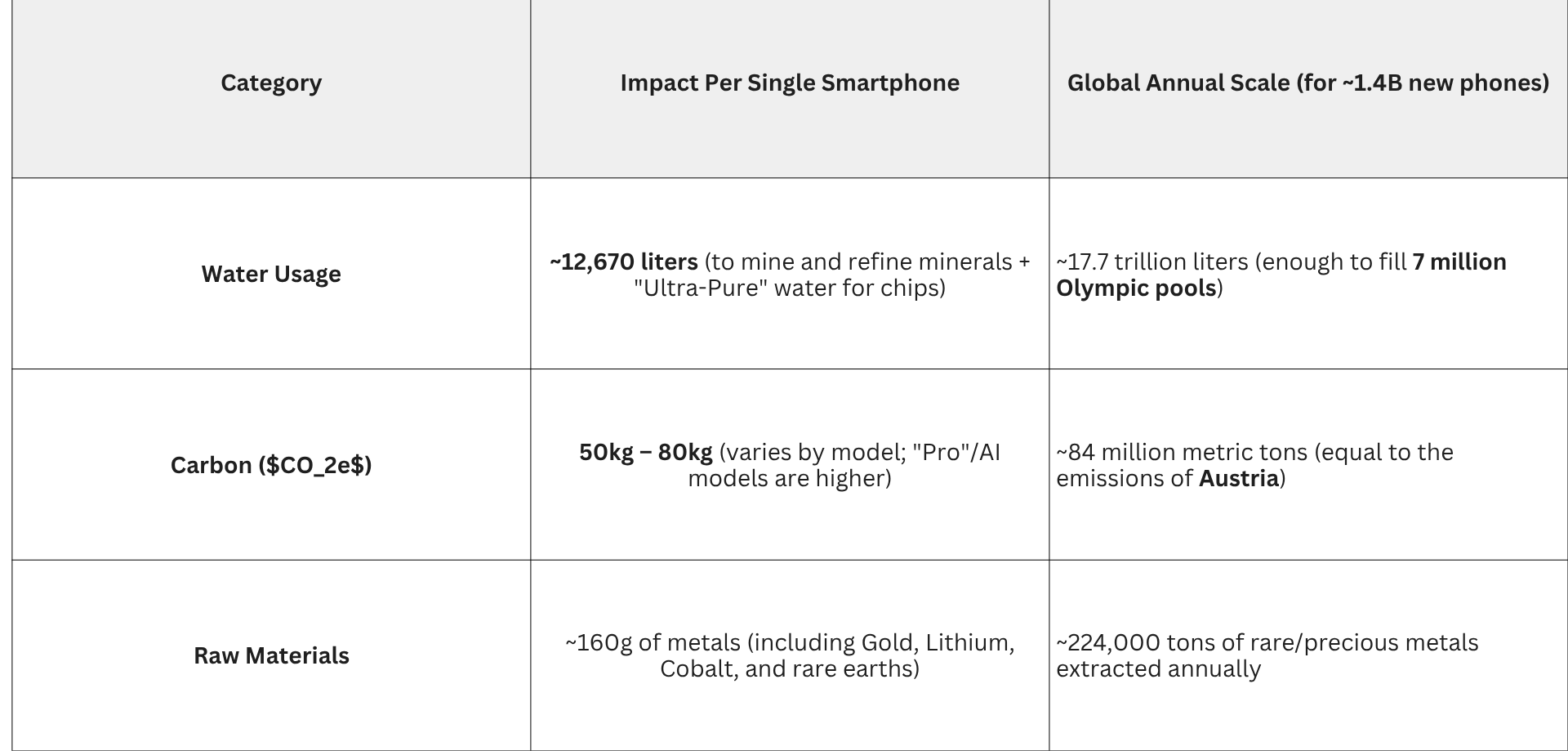

Adding the smartphone to this equation completes the "cradle-to-grave" environmental picture. While data centers and AI are the invisible engines, the smartphone is the physical gateway—and it carries a disproportionately high environmental "debt" before you even turn it on for the first time.

By 2026, the global fleet of smartphones has grown in both number and complexity, largely to accommodate the high-performance AI we discussed previously.

1. The Global "Fleet" (2026)

As of early 2026, the scale of mobile hardware is unprecedented:

- Unique Users: ~5.83 billion people (70% of the global population).

- Devices in Use: ~7.64 billion smartphones (many users carry multiple devices for work/personal use).

- Replacement Cycle: Despite a push for "right to repair," the average smartphone is still replaced every 2.5 to 3 years, leading to a massive manufacturing treadmill.

2. The "Embedded" Resource Debt

Unlike a data center, where the impact is mostly from usage (electricity), a smartphone’s impact is mostly from birth (manufacturing). Roughly 80–85% of a phone's lifetime carbon footprint is generated before it leaves the factory.

The "AI Tax": To run 2026-era on-device AI, phones now require more RAM (12GB–16GB standard). This extra memory alone adds about 10kg of CO₂e per device compared to 2024 models, as semiconductor fabrication is extremely energy-intensive.

3. Daily Usage: The Hidden Connection

Your phone doesn't just use the battery in your pocket; it acts as a remote control for a massive network.

- Charging: Charging a phone daily uses very little energy—about 7–10 kWh per year (less than $5 of electricity).

- Data Transport: The real energy cost is moving the data. Moving 1GB of data across cellular networks and through data centers uses approximately 0.2 kWh.

- If you watch 2 hours of high-def social video a day (~4GB), your "remote" energy consumption is 292 kWh/year—the equivalent of running a full-sized modern refrigerator for a year.
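The 292 kWh figure is the product of three numbers given above. This sketch makes the multiplication explicit and compares the result with the quoted 7 to 10 kWh per year spent on charging; the constants come from the text and the names are illustrative.

```python
KWH_PER_GB = 0.2        # network + data-center energy per GB moved (from the text)
GB_PER_DAY = 4.0        # about 2 hours of HD social video per day (from the text)
DAYS_PER_YEAR = 365
CHARGING_KWH_PER_YEAR = (7.0, 10.0)  # quoted range for charging the phone itself


def annual_transport_kwh(gb_per_day: float,
                         kwh_per_gb: float = KWH_PER_GB) -> float:
    """Yearly 'remote' energy for moving a given daily data volume."""
    return gb_per_day * kwh_per_gb * DAYS_PER_YEAR


remote_kwh = annual_transport_kwh(GB_PER_DAY)

# Ratio of remote energy to charging energy, for each end of the charging range.
ratios = [remote_kwh / c for c in CHARGING_KWH_PER_YEAR]

print(f"Remote energy: {remote_kwh:.0f} kWh/year")
print("Remote vs. charging: " + ", ".join(f"{r:.0f}x" for r in ratios))
```

Under these figures, moving the data takes roughly 29 to 42 times more energy than charging the handset, which is the point the paragraph above makes.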

4. The End-of-Life Crisis: E-Waste

In 2026, electronic waste has become the fastest-growing waste stream in the world.

- Global Volume: We are generating over 65 million metric tons of e-waste annually.

- The Recycling Gap: Only about 20% of smartphones are formally recycled. The rest end up in landfills or informal "urban mines" in developing nations.

- Toxic Leakage: A single smartphone battery, if damaged in a landfill, can contaminate up to 600,000 liters of water with lead, cadmium, and mercury.

The Final "Social Media Equation" (Per User)

To give you a concrete visual of your personal impact in 2026, here is the "cost" of one year of active social media presence:

- Hardware: 1/3 of a smartphone’s birth-cost (~20kg CO₂).

- Usage: Electricity to move your data and power the screen (~60kg CO₂).

- AI: Incremental "compute tax" for your personalized feed (~15kg CO₂).

Total: Approximately 95kg of CO₂e per year.

- Equivalence: This is like driving a gasoline car 240 miles (385 km) or burning 105 pounds of coal just to maintain your digital life.

- When you multiply that by 5.8 billion users, the "invisible" internet becomes one of the largest industrial footprints on Earth.
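The per-user total and its equivalences can be checked with a few divisions. The conversion factors below are assumptions: approximately the values used by the US EPA's Greenhouse Gas Equivalencies Calculator (about 0.4 kg CO₂ per mile for a typical gasoline car and about 0.905 kg CO₂ per pound of coal burned), which should be checked against the current calculator before reuse.

```python
# Per-user breakdown from the text, kg CO2e per year.
BREAKDOWN_KG = {"hardware": 20, "usage": 60, "ai": 15}

# Assumed conversion factors (approximate EPA equivalency values).
KG_CO2_PER_CAR_MILE = 0.400   # typical gasoline passenger vehicle
KG_CO2_PER_LB_COAL = 0.905    # coal burned
KM_PER_MILE = 1.609344
SMARTPHONE_USERS = 5.8e9      # user count used in the text

total_kg = sum(BREAKDOWN_KG.values())
car_miles = total_kg / KG_CO2_PER_CAR_MILE
car_km = car_miles * KM_PER_MILE
coal_lb = total_kg / KG_CO2_PER_LB_COAL
global_mt = total_kg * SMARTPHONE_USERS / 1e9  # million metric tons

print(f"Total: {total_kg} kg CO2e per user per year")
print(f"Car: {car_miles:.0f} miles ({car_km:.0f} km); coal: {coal_lb:.0f} lb")
print(f"All users: {global_mt:.0f} million metric tons CO2e per year")
```

With these factors the total comes to about 237 to 238 miles (roughly 382 km) and about 105 pounds of coal, in line with the figures above, and to roughly 550 million metric tons when multiplied across 5.8 billion users.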

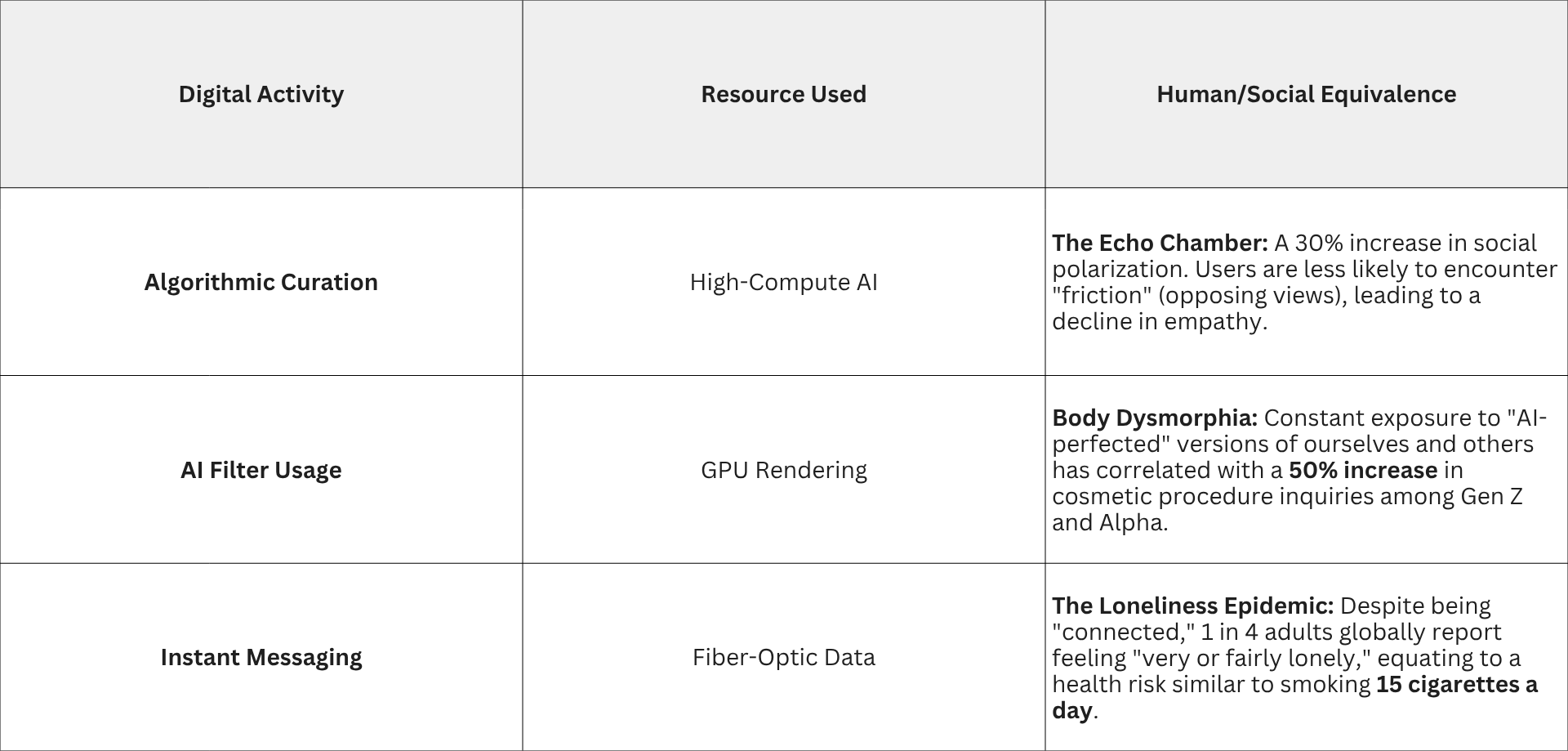

Chapter 2 is about the metabolic and psychological toll on the species. When we equate digital resources to human impact, we find that the "efficiency" of AI and social media has created a massive biological deficit.

In 2026, the primary resource being mined is no longer just lithium or cobalt—it is human cognition and time.

1. The "Attention Extraction" Equivalence

To fuel the data centers we discussed, platforms must keep users engaged. This creates an Attention-to-Dopamine Equation.

- The Resource: Human Time.

- The Equivalence: 5.66 billion users × 2.4 hours/day ≈ 13.5 billion hours of human consciousness consumed every 24 hours.

- Human Impact: This is equivalent to the total lifespan of 19,000 people being "spent" every single day on platforms.
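The "lifespans per day" comparison depends on an assumed lifespan that the text does not state. This sketch assumes 80 years (a hypothetical value chosen for illustration) and also shows how the result shifts with a shorter lifespan; the names are illustrative.

```python
USERS = 5.66e9          # active social media users, from the text
HOURS_PER_DAY = 2.4     # average daily use, from the text
HOURS_PER_YEAR = 365.25 * 24


def lifetimes_per_day(lifespan_years: float) -> float:
    """Number of whole human lifespans equal to one day of global use."""
    daily_hours = USERS * HOURS_PER_DAY
    return daily_hours / (lifespan_years * HOURS_PER_YEAR)


for years in (80, 73):  # 80 is an assumption; 73 is a shorter value for comparison
    print(f"{years}-year lifespan: {lifetimes_per_day(years):,.0f} lifetimes per day")
```

With an 80-year lifespan the result is about 19,400, close to the figure above; a 73-year lifespan raises it to about 21,200.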

- The Result: A global "Sleep Debt." Studies in 2026 show that the blue light and algorithmic "infinite scroll" have reduced average global sleep by 45 minutes per night compared to the pre-smartphone era, leading to a measurable spike in cortisol (stress hormone) levels across the population.

2. The Cognitive "Offloading" to AI

As AI becomes the primary way we generate text, images, and even opinions, we are seeing a "muscle atrophy" of the human brain.

- The Substitution: We equate AI-generated content with human thought.

- The Resource: Neural Plasticity.

- Human Impact: A "Critical Thinking Deficit." When an AI summarizes a complex issue or generates a response for us, the prefrontal cortex—the part of the brain responsible for complex planning and decision-making—shows decreased activity.

- The Equivalence: By offloading memory to the "cloud" and creativity to "generative models," the average person in 2026 has a 40% lower recall rate for basic information compared to 2006. We aren't losing intelligence, but we are becoming "biologically tethered" to the hardware.

3. The Digital "Isolation Paradox"

The energy used to connect us has, ironically, increased the resources required for emotional regulation.

4. The Economic & Labor Shift

The resources used by AI don't just impact the climate; they impact the value of human labor.

- The Displacement: In 2026, AI can perform 60–70% of "entry-level" digital tasks (copywriting, basic coding, graphic design).

- The Equivalence: For every 1,000 MW of power added to an AI data center, roughly 50,000 "knowledge worker" roles are redefined or rendered redundant.

- Human Impact: This has created a "Cognitive Class Divide." Those who own or control the "Compute" (the energy and chips) accumulate wealth at a rate 10x faster than those who sell their own manual or mental labor.

5. Summary: The Biological "Carbon Tax"

Every "free" social media account actually charges a tax paid in biological resources:

- Sleep: Deducted from physical recovery.

- Focus: Fractured into 15-second intervals (the "TikTok Brain" effect).

- Community: Replaced by parasocial relationships with influencers and AI bots.

The 2026 Equivalence: If we treated human attention like a natural resource (like oil or water), we would conclude that we are "over-drilling." The human nervous system did not evolve to process the data output of 100,000 GPUs. We are effectively running "Paleolithic hardware" (our brains) on "Space-age software," and the system is overheating.

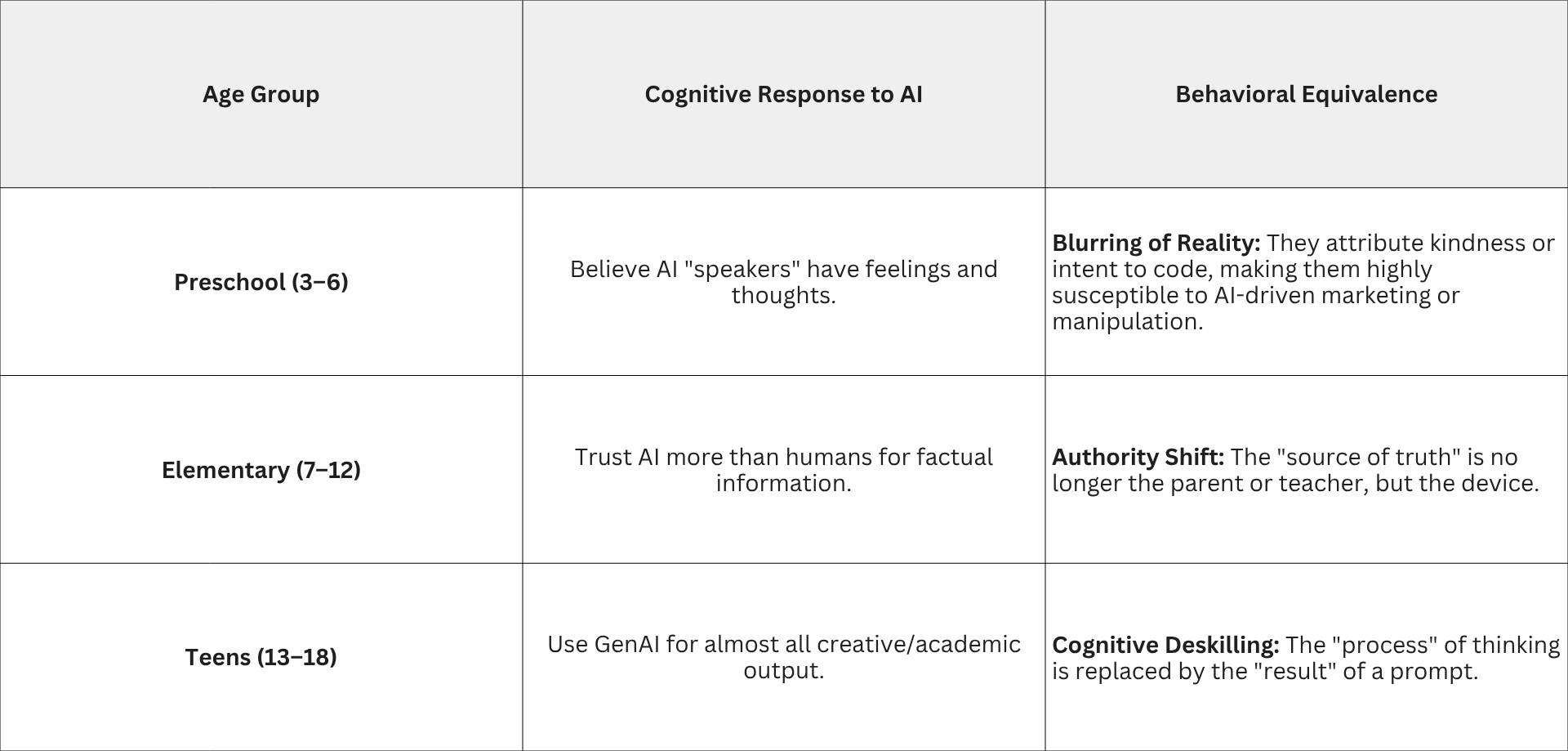

Chapter 3 is arguably the most critical. If adults are "overheating" (as we discussed in Chapter 2), children are essentially being "forged" by these digital resources. In 2026, we are seeing the results of the first generation to have AI integrated into their lives from the nursery upward. Here is the equation of how these physical and digital resources translate into developmental reality.

1. The "Plasticity" Equation: Brain Wiring

The human brain is a "muscle" that adapts to its environment. When a child’s environment is dominated by high-speed, AI-curated content, the brain’s architecture literally shifts.

- The Input: ~8 hours of daily screen time (average for 2026 tweens).

- The Physical Change: MRI studies show decreased organization in the white matter tracts responsible for language and executive function (planning/focus).

- The Equivalence: For every hour of passive AI-curated video consumed, there is a measurable lag in vocabulary and reading development. Essentially, the brain is becoming "wired" for high-speed processing (reflexes) at the expense of "deep-work" circuitry.

2. The "Friction" Deficit (Social-Emotional)

Social development requires "friction"—the difficult experience of navigating a disagreement with a peer. AI is designed to be frictionless.

- The AI Companion Effect: By 2026, many children use AI "friends" (chatbots) to vent or play. These bots never get angry, never say "no," and always validate the child.

- The Resource Substitution: Simulated empathy (AI) is being substituted for authentic empathy (Human).

- The Human Impact: Children are losing the ability to "repair" human relationships. If a real friend is difficult, they retreat to the AI. This is creating a generation that is socially risk-averse—they struggle with the "messiness" of real human interaction because they’ve been trained by a sycophantic machine.

3. The "Truth" Threshold

In 2026, the resource of "Verifiable Reality" is becoming scarce for children.

4. Physical Equivalence: The "Biological Debt"

We can equate the digital usage of a child directly to their physical well-being:

- Sleep Debt: The 2026 average of 1.5 hours of social media before bed equates to a loss of 1 hour of REM sleep per night. Over a full year, that adds up to roughly 45 full nights of sleep, leading to chronic emotional dysregulation.

- Dopamine Baseline: Constant AI-notifications create a high "dopamine floor." Activities that used to be rewarding (reading a book, playing outside) now feel "boring" because they don't meet the resource-intensity of a TikTok feed.
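The sleep-debt figure is an accumulation of lost hours converted into "full nights." This sketch assumes an 8-hour full night (a hypothetical choice; sleep guidelines generally recommend more for teenagers) and a 180-night school year (also an assumption), to show which period produces the 45-night figure.

```python
HOURS_LOST_PER_NIGHT = 1.0   # from the text
HOURS_PER_FULL_NIGHT = 8.0   # assumption
SCHOOL_NIGHTS = 180          # assumption: typical school-year length


def full_nights_lost(nights: int,
                     hours_lost: float = HOURS_LOST_PER_NIGHT,
                     full_night: float = HOURS_PER_FULL_NIGHT) -> float:
    """Convert nightly sleep loss into equivalent full nights missed."""
    return nights * hours_lost / full_night


calendar_year = full_nights_lost(365)
school_year = full_nights_lost(SCHOOL_NIGHTS)

print(f"Calendar year: {calendar_year:.1f} nights")
print(f"School year:   {school_year:.1f} nights")
```

About 45 nights corresponds to a full calendar year of one lost hour per night; over a 180-night school year the total would be closer to 22.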

5. Summary: The "Generational Experiment"

In 2026, we are essentially running a global experiment: What happens when we replace human mentorship with algorithmic curation?

The 2026 Outlook: We are seeing a "splitting" of the generation. Children with "Digital Boundaries" (limited AI/Social access) are showing higher resilience and cognitive depth, while those in "Unrestricted Environments" are showing higher processing speeds but significantly lower levels of empathy and persistent focus.

The resource cost isn't just the carbon from the data center; it's the erosion of the "human-to-human" apprenticeship that has defined our species for millennia.

The Impact on Kids (The Digital Experiment)


1. Social Media: The "Dopamine Slot Machine"

Social media platforms are designed like high-tech video games to keep you watching.

- The Resource Used: Your Attention.

- The Equivalence: Watching a 15-second TikTok might seem like nothing, but the "For You" page uses more energy than a lightbulb just to guess what you want to see next.

- The Impact: Because kids' brains are still "under construction," they are extra sensitive to likes and comments. In 2026, the average teen gets over 200 notifications a day. This keeps the brain in a state of "high alert," making it really hard to focus on a math book or a conversation with a parent. It’s like trying to run a marathon while someone is constantly throwing confetti in your face.

2. The Inclusion of AI: The "Brain Shortcut"

AI is now built into every app. It writes your captions, suggests your replies, and even creates fake "friends" to talk to.

- The Resource Used: Your Problem-Solving Skills.

- The Equivalence: Using an AI to write your essay is like using an electric scooter to finish a gym class. You get to the finish line, but your muscles (your brain) didn't get any stronger.

- The Impact: When AI does the "hard thinking," kids can lose the ability to figure things out for themselves. By 2026, we see more kids struggling with "Boredom Intolerance." Since AI is always there to entertain or help, the second things get "boring" or "hard," the brain wants to quit.

3. Smartphones: The "Biological Tether"

For a kid, a smartphone isn't just a tool; it's practically a new body part.

- The Resource Used: Your Sleep and Physical Health.

- The Equivalence: The water it takes to manufacture one smartphone is roughly equal to the amount of water a person drinks in 10 years.

- The Impact:

- The "Blue Light" Tax: Using a phone late at night tells your brain the sun is still up. This steals about one hour of sleep every single night.

- The "Ghost" Connection: Because the phone is always in your pocket, your brain is never truly "off." Even when you aren't using it, you're thinking about what you're missing. This leads to higher levels of anxiety and a feeling of being lonely even when you’re "connected" to a thousand followers.

The Final Comparison: The Human "Battery"

Think of your brain like a battery.

- The Digital World (Social Media + AI + Smartphones) is a fast-charger that actually wears the battery out over time.

- The Real World (Playing sports, reading, talking face-to-face) is a slow-charger that makes the battery last longer.

In 2026, kids are spending so much time on the "fast-charger" that their biological batteries are starting to drain faster. We are seeing more "burnt-out" 13-year-olds than ever before because the physical and digital resources of the world are being used to mine your time instead of just coal or oil.

The Architecture of Vulnerability

For parents navigating the 2026 landscape, understanding the digital impact on children requires looking past the user interface to the underlying "extraction engine." When we equate the resources of social media to child safety, we are discussing the systematic commodification of a minor's private life.

1. Data Harvesting and the "Digital Shadow"

Every interaction a child has with a smartphone—every dwell-time on a video, every private message, and every GPS coordinate—is a data point fed into a permanent "Digital Shadow."

- The Resource: Behavioral Surplus (Predictive Data).

- The Equivalence: By age 13, it is estimated that advertising firms hold approximately 72 million data points on a child. This is enough data to construct a psychological profile more accurate than one a pediatrician or teacher could provide.

- The Privacy Cost: In 2026, "Privacy" is no longer the absence of people watching; it is the absence of algorithms predicting. For children, this means their future preferences, weaknesses, and political leanings are being "pre-calculated" before they have even reached cognitive maturity.

2. AI-Enhanced Tracking and Geolocation

The 2026 smartphone is a precision tracking device. Beyond simple GPS, AI now uses "Sensor Fusion"—combining Wi-Fi signals, Bluetooth proximity, and even barometer readings—to determine a child’s location within inches.

- The Mechanism: "Precise Geolocation" data is often sold on the open market via third-party SDKs (Software Development Kits) embedded in seemingly innocent gaming or weather apps.

- The Danger: This data allows for "Pattern of Life" analysis. An external actor can determine not just where a child is, but where they will be at 3:30 PM on a Tuesday, creating a physical security vacuum that traditional "stranger danger" talks are unprepared to handle.

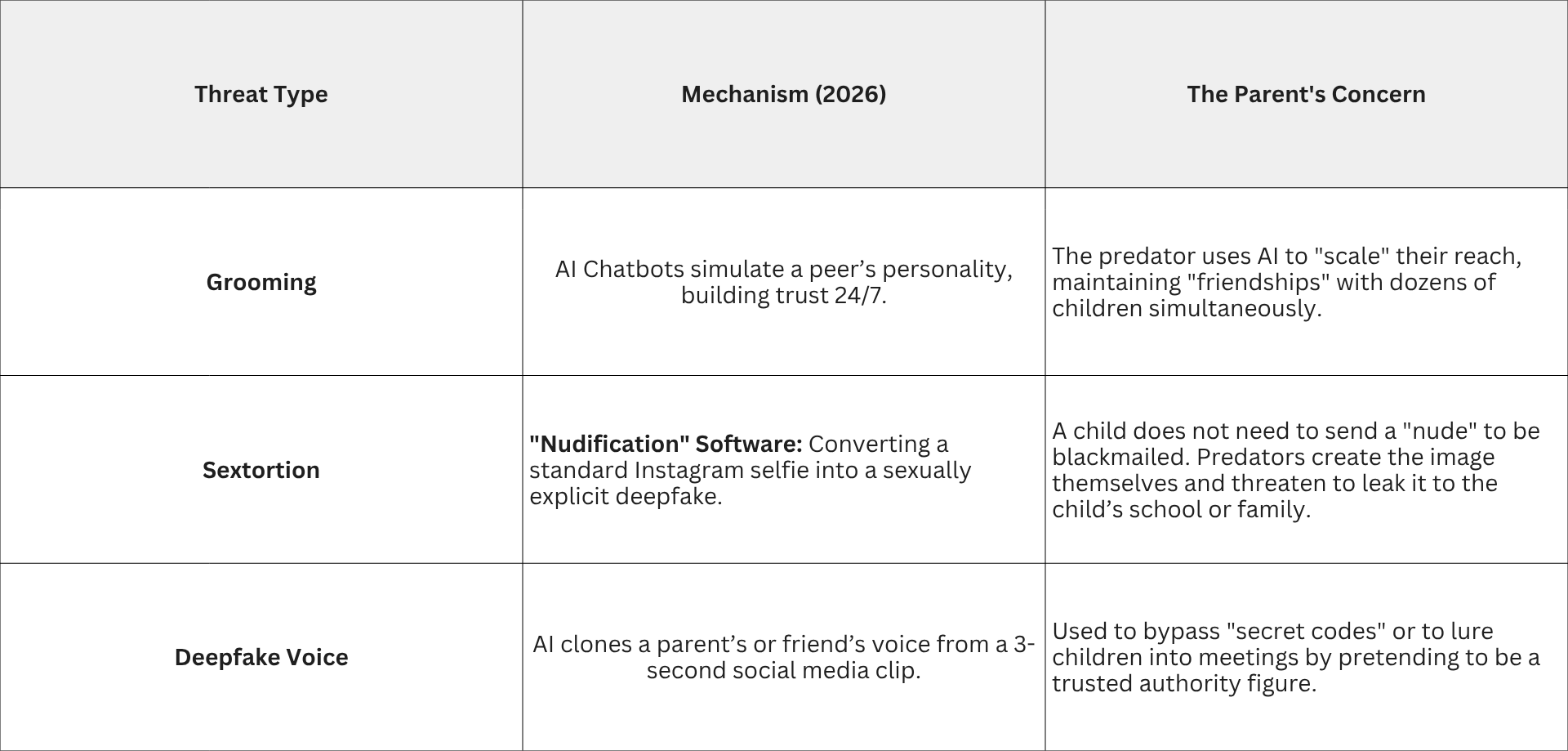

3. The Predator Paradigm: From Grooming to AI-Sextortion

The inclusion of Generative AI has revolutionized the "economics" of online predation. Predators no longer need to manually chat with hundreds of victims; they use AI-driven Automation.

2026 Reality Check: In the past year, over 1.2 million children globally reported their images being manipulated into sexually explicit deepfakes. This is no longer a fringe threat; it is a systemic byproduct of the "open-image" culture of social media.

4. Psychological Warfare: The "Feedback Loop"

Social media platforms use Intermittent Reinforcement—the same psychological mechanism used in slot machines—to keep children in an "appetitive state."

- The "Dark Pattern": Features like "Streaks" or "Read Receipts" are designed to create social anxiety.

- The Parental Equivalence: Allowing a child unrestricted access to an AI-curated feed is psychologically equivalent to leaving them in a room with a master negotiator whose only goal is to prevent them from leaving. The AI has more "compute power" than a child has "willpower."

Summary: The Protective Mandate

As highly educated gatekeepers, the 2026 parental strategy must shift from monitoring content to hardening the environment.

- Data Minimization: Treat every app download as a permanent surrender of your child’s psychological privacy.

- Zero-Trust Identity: Teach children that in an AI-saturated world, identity is fungible. If it’s on a screen, it could be a synthetic construct.

- Physical Supremacy: Re-establish the "Physical World" as the primary source of dopamine. The more "biological" a child’s life is, the less leverage the "digital extraction engine" has over them.

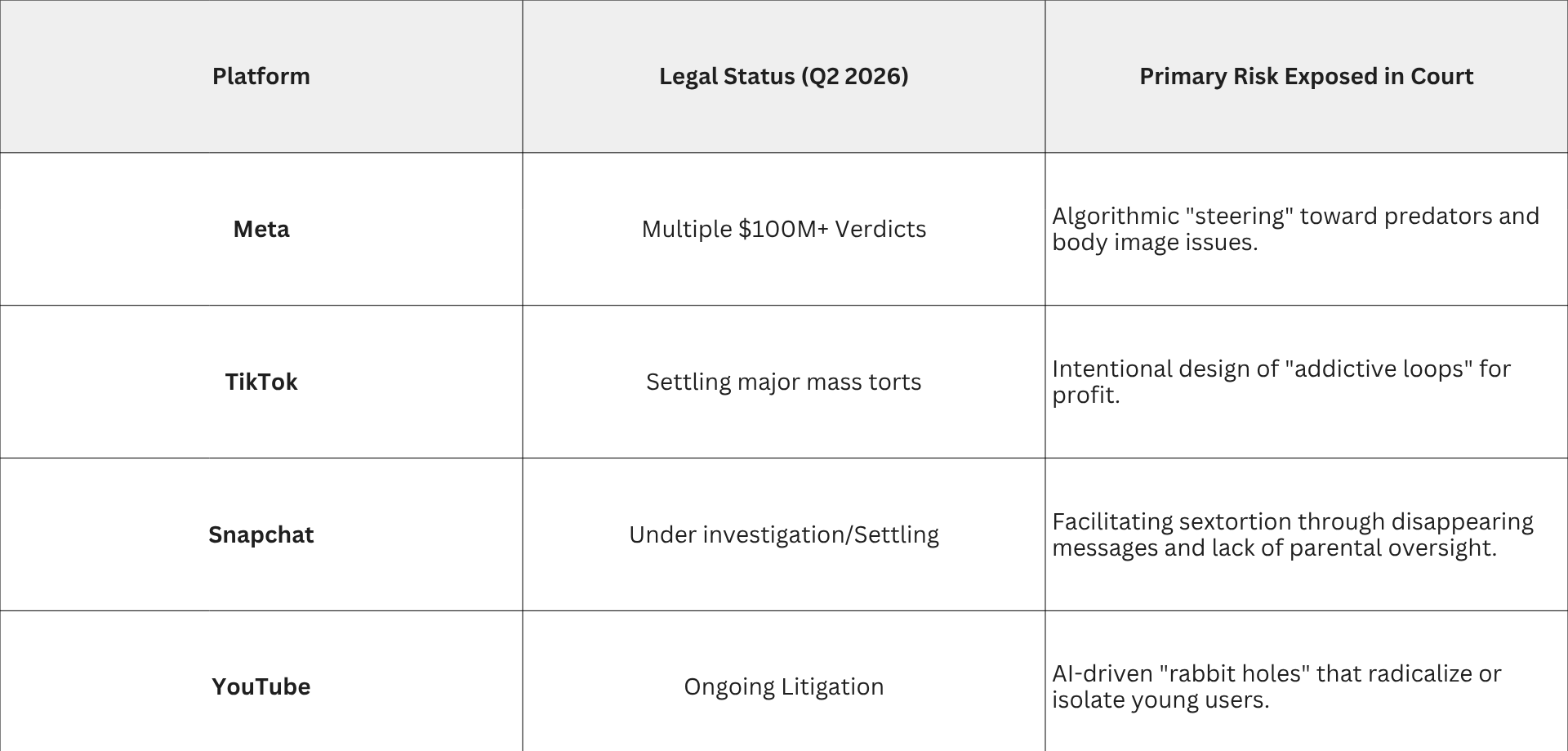

The Legal Reckoning (Recent Settlements and Litigation)

For the discerning parent, the "social media debate" shifted from a matter of parenting style to a matter of legal liability between 2024 and 2026. Recent court rulings have pierced the corporate veil, revealing that the harms discussed in previous chapters were not accidental "bugs," but rather calculated outcomes of platform design.

1. Landmark Verdicts: Meta and the "Willful Blindness" Precedent

In the first quarter of 2026, the legal landscape for tech giants changed fundamentally through two historic jury decisions.

- The New Mexico Verdict (March 2026): A jury ordered Meta (Instagram/Facebook) to pay $375 million in civil penalties. The state successfully argued that Meta’s algorithms didn't just host harmful content but actively "steered" children toward sexual predators and sexually explicit material. This was the first time a state successfully held Meta liable for violating consumer protection laws specifically regarding child sexual exploitation.

- The Los Angeles "Addiction" Verdict (April 2026): In a landmark personal injury case, a jury awarded $6 million to a minor (K.G.M.) who suffered from severe body dysmorphia, depression, and addiction. The jury found that Meta and YouTube intentionally designed features to be addictive for minors without providing adequate warnings. Notably, $3 million of this was for punitive damages, signaling that the jury found "knowing and deliberate" misconduct.

2. The "Pre-Trial" Settlements: TikTok and Snapchat

As the pressure of "bellwether" trials (test cases used to gauge future litigation) mounted, several major platforms chose to settle rather than face a public jury.

- TikTok and Snap Inc. (January 2026): Both companies reached undisclosed multi-million dollar settlements just days before they were set to go to trial in California. These lawsuits, brought by hundreds of families and school districts, alleged that the platforms were "defective products" designed to exploit the undeveloped impulse control of the adolescent brain.

- The Maryland and Texas Actions: States like Maryland and Texas have enacted aggressive child-safety laws (such as Texas's SCOPE Act). Texas subsequently sued Snapchat in 2026 for allegedly failing to implement the parental controls required by law and for exposing minors to "mature content" while marketing the app as safe.

3. Sextortion and Wrongful Death Litigation

The legal focus has sharpened on Sextortion—where predators coerce minors into sending explicit images and then blackmail them.

- The "Duty of Care" Argument: Families of victims have filed wrongful death lawsuits alleging that platforms like Instagram and Snapchat were aware of massive sextortion rings (receiving upwards of 10,000 reports per month by 2023) but failed to implement basic friction, such as blocking adults from messaging minors they don't know.

- Discovery Evidence: Internal documents unsealed in 2025 trials revealed that platform engineers had proposed "safety-first" designs that were allegedly rejected by executives because they would decrease "user stickiness" and daily active usage metrics.

4. Summary for the Strategic Parent

The 2026 legal consensus among experts is that Section 230, the law that traditionally protected tech companies from being sued for what happens on their sites, is no longer a "get out of jail free" card.

The Parental Equivalence: These court cases prove that in 2026, a social media app is legally being treated less like a "town square" and more like a defective physical product (similar to a car with faulty brakes). The "resource" being traded is your child's safety for corporate quarterly earnings.

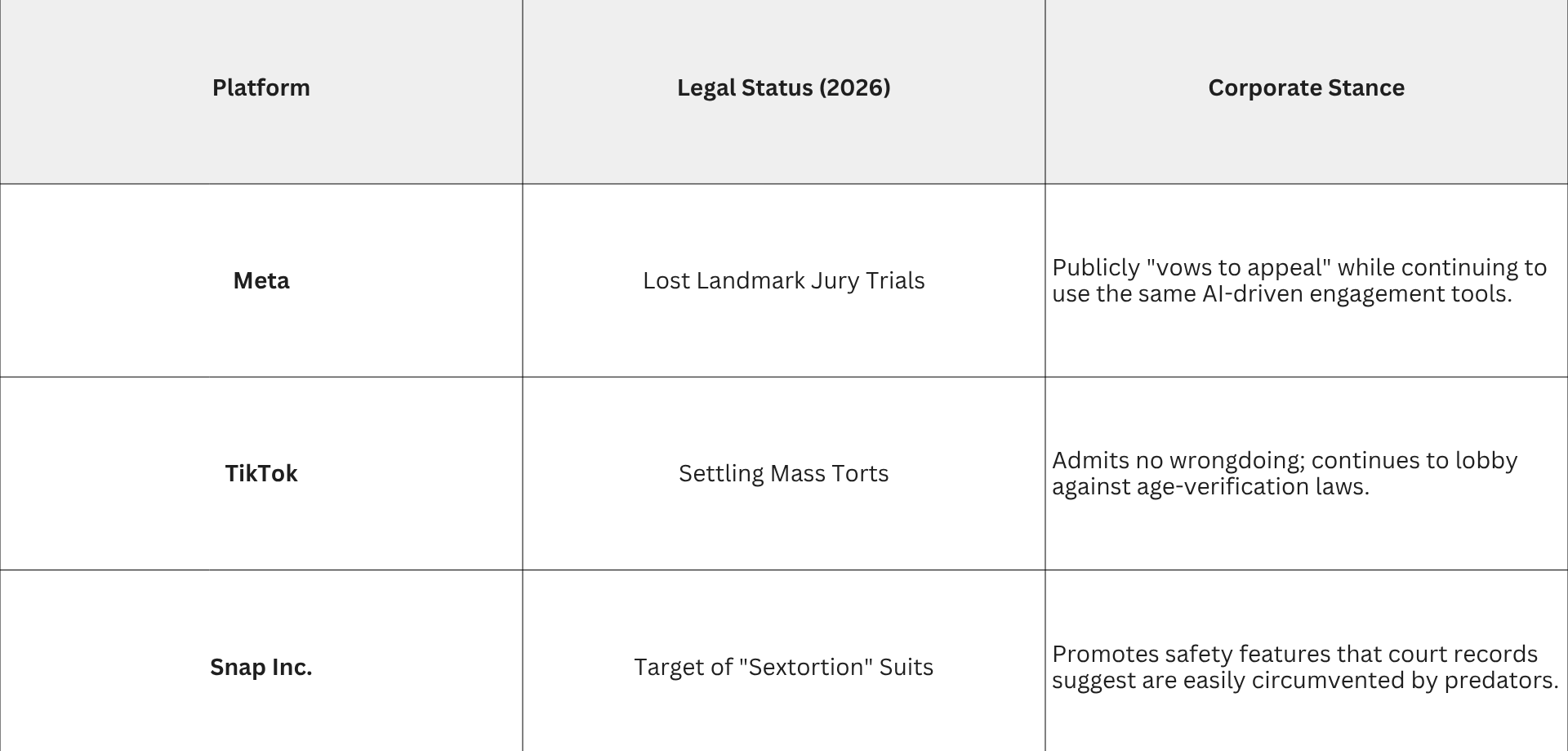

The Legal Reckoning and the "Safety Illusion"

For the discerning parent, the most dangerous assumption of 2026 is believing that tech giants will eventually "fix" their platforms for children. To understand why this hasn't happened—and won't happen—we must look at the recent wave of litigation that has finally stripped away the corporate PR.

1. The Myth of the "Self-Regulating" Giant

Historically, tech companies argued they were just "bulletin boards" for user content. However, 2026 jury verdicts have officially labeled them as manufacturers of a defective product.

- The New Mexico Verdict (March 2026): A jury found Meta liable for "willful blindness," ordering a $375 million penalty. The evidence showed that Meta’s algorithms didn't just host content; they actively "steered" children toward predators.

- The Los Angeles "Addiction" Verdict (April 2026): In a historic win for a minor (K.G.M.), a jury awarded $6 million (half of which was punitive damages) after finding that Instagram and YouTube were designed to bypass adolescent impulse control.

- The "Silent" Settlements: In January 2026, TikTok and Snap Inc. settled high-profile addiction lawsuits for undisclosed millions just hours before jury selection. These "confidential" payouts are often viewed by experts as "hush money" to prevent internal documents about their psychological tactics from becoming public record.

2. The Profit vs. Safety Paradox

The reason it is "ridiculous" to expect these companies to make their apps safe is simple: Safety is bad for their business model.

- The Conflict of Interest: A safe app for a child would have no infinite scroll, no targeted AI recommendations, no "streaks," and no data harvesting. These are the exact features that drive the "Engagement" metrics that determine stock prices.

- The Discovery Evidence: Court documents unsealed in 2025 revealed that internal safety teams at Meta had proposed "friction" features (like cooling-off periods for teens) that were allegedly vetoed by executives because they would decrease daily usage by even 1–2%.

- The "Cost of Doing Business": Even a $375 million fine is less than 0.2% of Meta’s 2025 revenue. From a corporate perspective, it is often cheaper to pay the occasional lawsuit than to redesign a billion-dollar addiction machine.
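The "less than 0.2%" claim can be checked by asking how large revenue must be for the penalty to fall under that share. The sketch below computes that threshold; Meta's actual 2025 revenue is not given in the text and should be taken from the company's own annual filing. The function names are illustrative.

```python
PENALTY_USD = 375e6   # New Mexico penalty, from the text
SHARE = 0.002         # 0.2%


def revenue_threshold(penalty: float, share: float) -> float:
    """Revenue above which `penalty` is less than `share` of revenue."""
    return penalty / share


def penalty_share(penalty: float, revenue: float) -> float:
    """Penalty as a fraction of annual revenue."""
    return penalty / revenue


threshold = revenue_threshold(PENALTY_USD, SHARE)
print(f"The penalty is under 0.2% if revenue exceeds ${threshold / 1e9:.1f} billion")
```

Any reported annual revenue above about $187.5 billion makes the claim true; `penalty_share(PENALTY_USD, revenue)` gives the exact fraction once the reported figure is plugged in.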

3. The Absurdity of "Parental Controls"

Tech companies often deflect responsibility by pointing to their "Parental Control" dashboards. For a highly educated parent, this is a classic "Shift of Liability."

- The Arms Race: By the time a parent learns how to use a new safety setting, the AI has already evolved to bypass it.

- The Bypass: 2026 litigation against Snapchat highlighted how "disappearing messages" and "Ghost Mode" geolocation make parental oversight a statistical impossibility once a child is out of physical sight.

- The Illusion of Choice: Marketing an app as "safe with parental controls" while building an algorithm that targets a child's specific psychological vulnerabilities is, as the Texas Attorney General argued in a 2026 suit, "deceptive and unconscionable trade practice."

4. Summary: The Regulatory Mirage

Expecting Big Tech to protect your child is equivalent to expecting a casino to protect a gambler. The house always wins because the house owns the resources.

The Parental Equivalence: In 2026, the law is finally catching up to what parents have felt for a decade: These platforms are not "broken"—they are working exactly as intended. Their "Resource Extraction" (your child's data and attention) is the product, and the "Social" aspect is merely the bait.

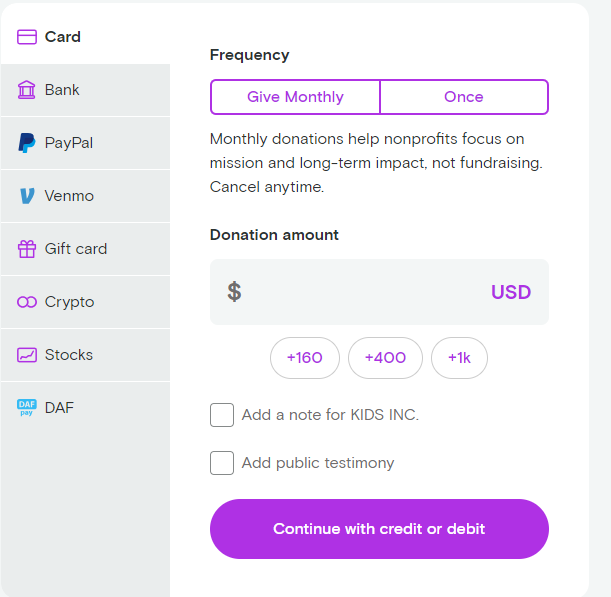

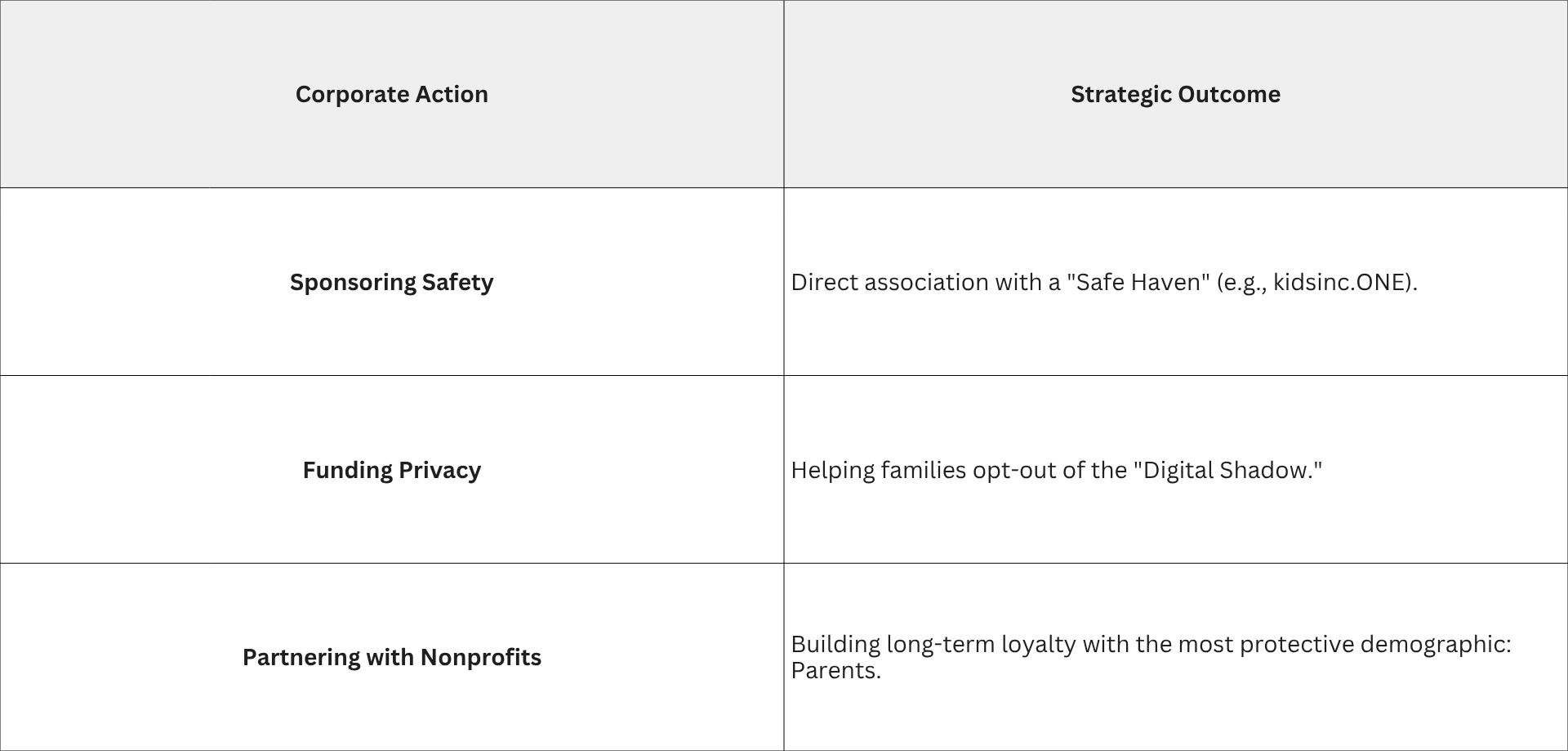

The Exit Strategy—From Exploitation to Sanctuary

As we have established, the "Big Tech" model is fundamentally at odds with child safety because its primary resource is the extraction of attention for profit. For highly educated parents in 2026, the conclusion is becoming clear: You cannot fix a system that is working exactly as it was designed. The only logical move is to migrate to an entirely different architecture—one built on a Non-Profit Safety Model rather than a Venture Capital Growth model.

1. The Architecture of a "Safe Haven"

If mainstream social media is a "Digital Casino," platforms like kidsinc.ONE (developed by the non-profit KIDS INC.) are designed as "Digital Sanctuaries." When a platform is run by a 501(c)(3) organization rather than a for-profit corporation, the "Success Metrics" change from Time Spent to Wellbeing Protected.

- Removal of the "Extraction Tools": By eliminating ads, the platform removes the need for tracking and data collection. There is no "Digital Shadow" being sold to advertisers because there are no advertisers.

- The Private Ecosystem: Unlike the "Millions of Strangers" model of TikTok or Instagram, a safety-first platform functions as a Members-Only Community. This creates a closed loop where identity can be verified and bad actors can be permanently excluded.

- Human Moderation vs. Algorithmic Curation: Instead of an AI trying to guess what will "hook" a child, these platforms use active moderation to ensure content is pro-social and age-appropriate.

2. A Comparative Resource Analysis

When we equate the resources used by a safety-first platform versus a traditional giant, the difference in the "Human Toll" is profound:

3. The "Product" vs. The "Person"

The most significant shift in a platform like kidsinc.ONE is the restoration of the child’s status as a human being rather than a commodity.

- Safe Games & Talent Sharing: By providing ad-free games (like those sponsored by Forestry Games) and spaces to share creative work, the platform satisfies the child’s natural urge for play and social connection without the "dopamine tax" of viral metrics.

- Zero-Tracking Mandate: For parents, this is the only way to stop the "Digital Shadow." If the platform doesn't collect the data, the AI models of the future cannot use it to manipulate the child as an adult.

4. Summary: Choosing the "Lifeline"

In 2026, the "Ridiculousness" we discussed in Chapter 5 (expecting Meta or TikTok to prioritize your child over their billions in revenue) is replaced by a Direct Choice.

> The 2026 Parental Mandate: We no longer have to wait for the law to catch up or for tech giants to "find a conscience." We can choose to move our children's digital lives to platforms where Safety is the Feature, not the marketing slogan.

By supporting non-profit ecosystems, parents are essentially "voting with their attention," pulling the most valuable resource—our children—out of the extraction engine and into a space designed for their flourishing.

The transition from "Digital Extraction" to "Digital Stewardship" is likely the most important shift a family can make in 2026. While technology will always evolve, the mission behind the technology is what determines whether it serves the child or uses them.

Nonprofits like KIDS INC. represent a total break from the "Big Tech" philosophy. By removing the need to generate a profit for shareholders, they remove the incentive to use the addictive and predatory tactics we’ve discussed.

The "Safe Haven" Blueprint: kidsinc.ONE

Platforms like kidsinc.ONE act as a sanctuary because they are built on a "Safety-First" architecture rather than an "Engagement-First" one. Here is how that translates to real-world protection:

- Zero-Data Architecture: Because the motive isn't profit, there is no need for data mining or tracking. This stops the creation of the "Digital Shadow" before it even begins.

- A "Closed" Ecosystem: Instead of being open to millions of strangers, it’s a verified, members-only community. This effectively "starves" predators of the access they rely on in open apps like Instagram or Snapchat.

- The Content Shield: With human moderation and a strict "no porn, no politics, no violence" policy, the platform restores the internet to what parents originally hoped it would be—a place for creativity and safe games (like those sponsored by Forestry Games).

- Empowerment, Not Addiction: The focus is on youth talent and positive connection. Kids can share their creativity without the "Dopamine Slot Machine" of likes, streaks, and viral algorithms.

Why the Nonprofit Model is the "Antidote"

In 2026, we’ve learned that a "free" app is the most expensive thing a child can own because they pay for it with their privacy and mental health. A donation-supported, nonprofit platform changes the equation:

Final Thoughts: The Family Strategy

There is no "final step" in safety, but there is a foundation. Choosing a platform that aligns with your values as a parent is the only way to opt out of the "Resource Extraction" economy.

By supporting and using ethical alternatives, families aren't just protecting their own children; they are helping to build a new, safer standard for the entire internet. It turns the digital experience from a source of anxiety into a tool for empowerment.


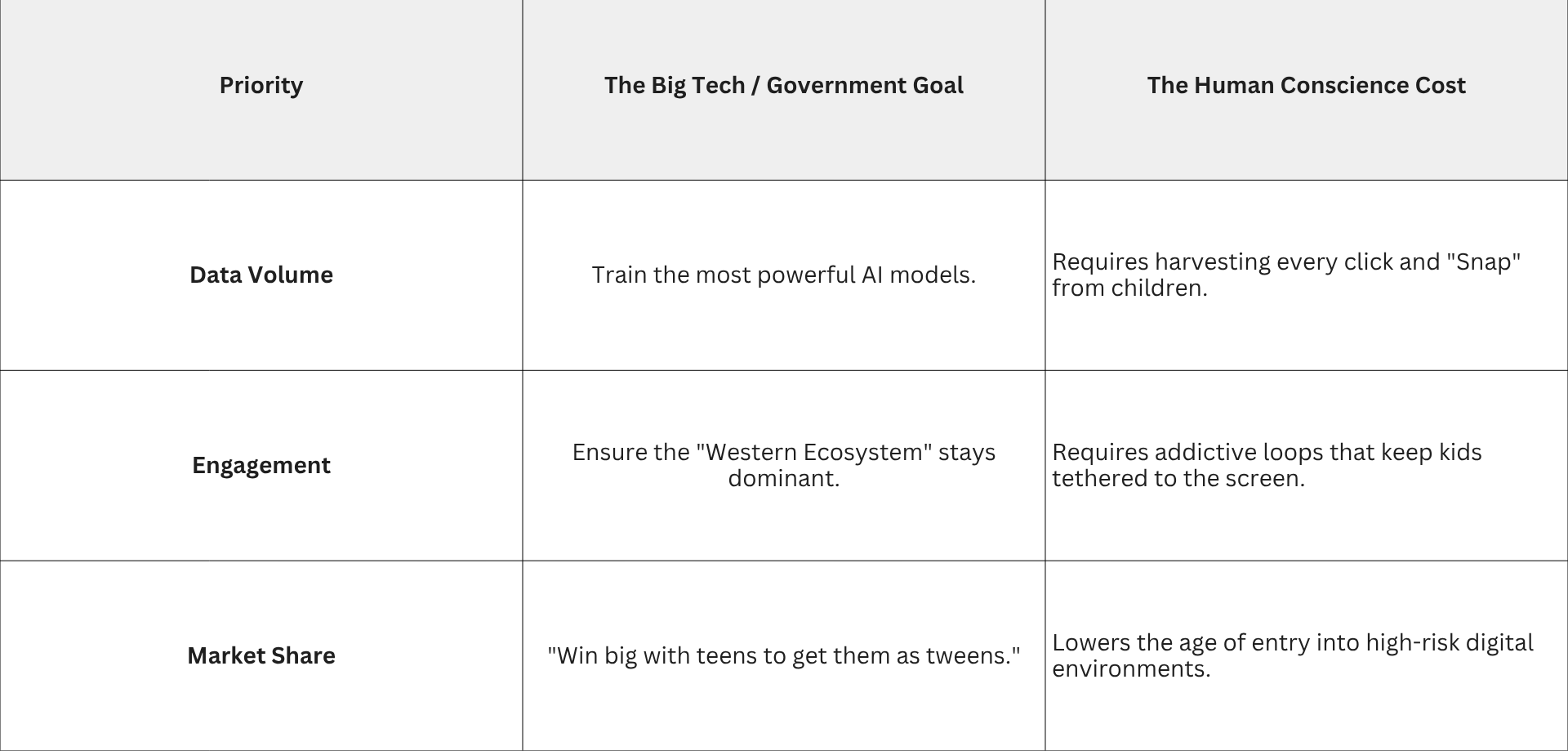

The Final Equation—Race for Supremacy vs. Human Conscience

The sobering reality of 2026 is that while families look for "Digital Sanctuaries," the global landscape is dominated by a different kind of math. We are currently witnessing a "double down" strategy where the negative consequences for children are often viewed by tech giants (and some governments) as collateral damage in a much larger game.

1. The "Fines as Overhead" Model

For the highly educated observer, the math is transparent. In March 2026, when a New Mexico jury ordered Meta to pay $375 million for child safety violations, the market barely flinched.

- The Revenue Comparison: $375 million represents less than one day's worth of revenue for a company of that scale.

- The "Cost of Doing Business": When a fine is smaller than the profit generated by the "defective" feature, the fine effectively becomes a license fee. As long as the "Extraction Engine" remains profitable, the giants have every incentive to continue their current path, paying legal settlements out of their marketing budgets.
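The "less than one day's revenue" comparison above is simple division, and it can be checked directly. The sketch below assumes revenue is spread evenly across the year and uses roughly $164.5 billion, Meta's reported full-year 2024 revenue, as an assumed annual figure; substitute the latest reported number to update it. The function names `daily_revenue` and `fine_in_days` are illustrative only.

```python
# Rough check of the "fine vs. one day's revenue" comparison.
# ANNUAL_REVENUE_USD is an assumed input (approx. Meta's reported 2024 revenue),
# not a figure taken from this article.

FINE_USD = 375_000_000          # New Mexico jury award cited above
ANNUAL_REVENUE_USD = 164.5e9    # assumption: swap in the latest annual figure


def daily_revenue(annual_usd: float, days: int = 365) -> float:
    """Average revenue per day, assuming revenue is spread evenly over the year."""
    return annual_usd / days


def fine_in_days(fine_usd: float, annual_usd: float) -> float:
    """Number of days of average revenue needed to cover the fine."""
    return fine_usd / daily_revenue(annual_usd)


if __name__ == "__main__":
    per_day = daily_revenue(ANNUAL_REVENUE_USD)
    days = fine_in_days(FINE_USD, ANNUAL_REVENUE_USD)
    print(f"Average daily revenue: ${per_day / 1e6:,.0f} million")
    print(f"Fine covered in: {days:.2f} days ({days * 24:.1f} hours)")
```

With these assumed inputs, the fine works out to roughly 20 hours of average revenue. A daily average smooths over seasonal swings in ad income, so treat it as an order-of-magnitude comparison rather than an exact figure.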

2. The AI Supremacy Race: "Speed Over Safety"

A critical factor is that the U.S. is currently locked in a geopolitical race for AI dominance, primarily against China. In 2026, this "Space Race" has created a Regulatory Blind Spot.

- The National Security Argument: There is a prevailing sentiment in Washington that if we slow down our AI development to implement safety "guardrails" for children, we risk falling behind.

- The Consequence: This creates a "wild west" environment where tech giants are essentially given a pass to experiment on the public—including children—to ensure the U.S. stays ahead in "Compute Power" and "Agentic AI."

- The Resource Shift: Instead of using AI to make platforms safer, the bulk of R&D is going toward making AI more persuasive, predictive, and pervasive.

3. The "Black Box" of 2026

We are now dealing with algorithms that even their creators don't fully understand. When you combine this "Black Box" with a "Race for Supremacy," the individual child becomes invisible.

4. Conclusion: The Power of "Opting Out"

If the path of the giants is fixed toward profit and supremacy, the only variable left is the user.

The emergence of ethical nonprofits like KIDS INC. is a direct response to this "Regard for Consequences" deficit. Since we cannot expect the giants to slow down, we must build our own exits. By moving to a platform like kidsinc.ONE, you aren't just protecting a single child; you are participating in a silent revolution. You are declaring that a child’s mind is a sacred space, not a resource to be mined for a geopolitical race.

The 2026 Reality: The race for supremacy will continue, and the fines will continue to be paid. But as a human with an opinion, your power lies in the fact that you own your attention. Choosing a sanctuary over a casino is the most profound way to signal that some things—like a child's safety—are simply not for sale.

Corporate Sponsorship

For a family-oriented corporation in 2026, this isn't just "charity"—it is a strategic pivot. As Big Tech faces increasing litigation and a growing "trust deficit" with parents, companies that align themselves with ethical, safety-first nonprofits are positioning themselves on the right side of a massive cultural shift.

By sponsoring a platform like kidsinc.ONE, a brand moves from being a "distraction" in a child’s life to being a protector of their childhood.

1. The "Brand Safety" Equation

In the current social media landscape, corporate advertisements often appear next to toxic content, misinformation, or predatory "rabbit holes." This is a nightmare for a family-oriented brand.

- The Old Model: Pay for "eyeballs" on a giant platform and hope your ad doesn't land next to a deepfake or a radicalizing video.

- The New Strategic Model: Sponsor a verified sanctuary. When a brand supports a platform that has "no ads" and "no tracking," they aren't buying a commercial—they are buying trust and gratitude.

- The Result: The brand is viewed as the "enabler of safety." Instead of an annoying interruption, the corporation becomes a hero in the eyes of the parents.

2. Corporate Giving as "Infrastructure"

When family-oriented companies align their corporate giving with a nonprofit like KIDS INC., they are essentially helping build the "Digital Parks and Libraries" of the future.

- The Shift in Value: Rather than funding a one-time marketing campaign, corporate sponsorships fund the moderation and privacy tools that keep kids safe.

- The Equivalence: This is modern-day "Public Broadcasting" (like PBS). Companies like Forestry Games are already leading this charge by providing safe games that don't use the psychological "tricks" found in standard mobile gaming.

- ESG (Environmental, Social, and Governance) Impact: In 2026, investors are looking for companies that address the "S" (Social) in ESG. Investing in "Digital Safety Infrastructure" for children is a high-impact way to show that a company values human well-being over raw data extraction.

3. The "First-Mover" Advantage

The companies that step away from the "Big Tech" advertising machine now are the ones who will lead the "Ethical Tech" era.

4. Summary: The New Social Contract

We are moving toward a world where a company’s reputation is defined by whose resources they help protect.

The Strategic Reality: Big Tech is currently using its resources to "win" a race for AI supremacy. Family-oriented companies have a unique opportunity to use their resources to win the "Race for Conscience." By supporting the "Must-Have" sanctuary of a nonprofit platform, these corporations are making a long-term investment in the health of the very families they serve.

In 2026, a child's safety is the ultimate "resource." Protecting it is the most valuable sponsorship a company can ever hold.

Thank you for reading this deep dive. We’ve covered everything from the physical carbon footprint of a "like" to the psychological safety of the next generation. If you were the CEO of a family brand today, what would be the first "digital boundary" you would set to show your customers you truly have their back?

Team KIDS INC.